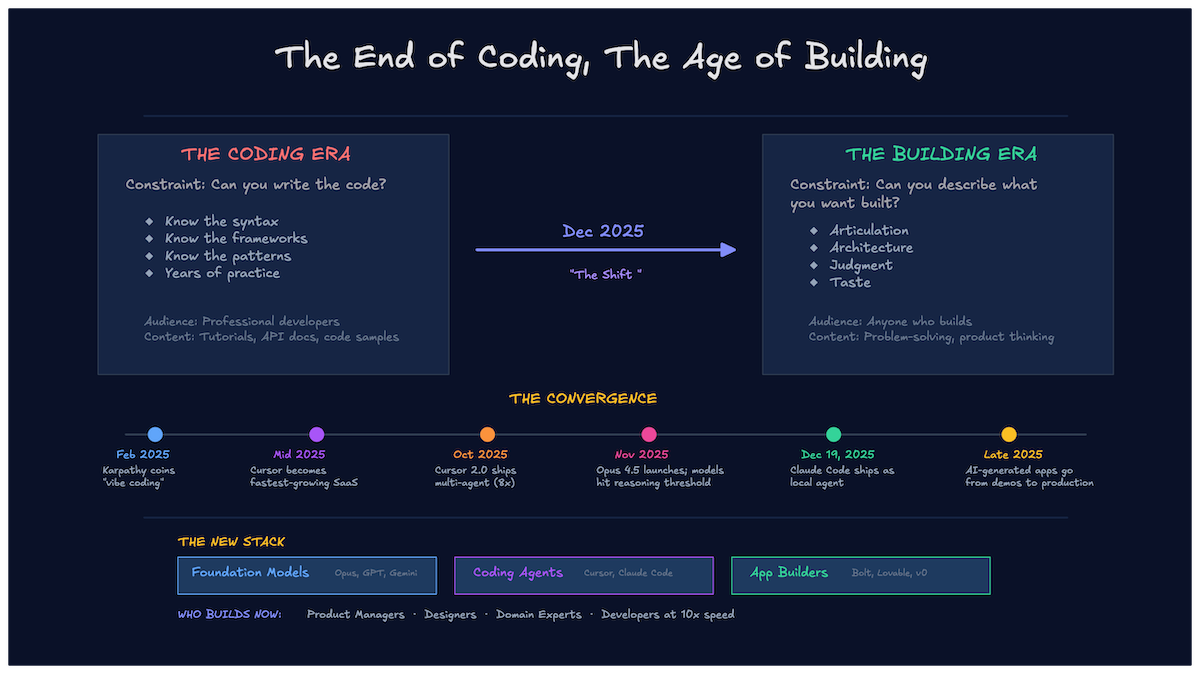

The constraint moved. For decades, the thing that determined whether software got built was whether someone could write the code. You needed syntax, framework knowledge, years of pattern recognition. That's not the constraint anymore. The constraint is whether you can describe what you want built, evaluate what comes back, and make good decisions about what to do next.

This didn't happen gradually. A set of tools crossed a threshold within about six months, and the gap between "I have an idea" and "I have a working prototype" collapsed from weeks to hours.

What happened in late 2025

The shift wasn't one tool getting better. It was five or six things converging at once.

Cursor shipped background agents in late 2025, letting multiple AI processes work across a codebase simultaneously. One agent refactors your auth layer while another writes tests for the module you just finished. Parallel execution against a shared codebase, with conflict resolution built in. That's a different editing model from autocomplete.

Anthropic released Claude Opus 4.5, one of a generation of models trained with reinforcement learning with verifiable rewards (RLVR) that crossed a reasoning threshold. Earlier models could write functions. These models could hold architectural context across a 50-file project, understand why a particular abstraction existed, and make changes that respected the existing design. The gap between "generates code" and "understands the codebase" closed.

Claude Code, running as a local CLI, reads your file system, runs your tests, and iterates on failures without round-tripping through a browser. It turned a chat interface into something closer to a junior developer sitting next to you, except one that could work through an entire debugging session in minutes.

And the browser-based builders matured. Bolt, Lovable, and v0 went from generating impressive demos to generating deployable applications. Not always clean applications. Not always production-grade. But working software that a non-developer could ship to real users, connected to real databases, with real authentication flows.

Each of these alone was incremental. Together, they moved the floor.

The coding era versus the building era

The coding era rewarded a specific set of skills. You needed to know that Python dicts are hash maps, that React re-renders on state change, that SQL joins have performance implications at scale. Years of practice built an intuition for debugging, architecture, and language-specific idioms. The work was writing code, and the quality of the code depended on your accumulated experience writing it.

The building era rewards a different set. Articulation matters more than syntax. Can you describe the data model clearly enough that an AI generates the right schema? Do you know that this feature needs async processing instead of a synchronous call, even if you've never wired it up yourself? And when the AI generates three approaches, can you tell which one breaks at scale? The skills shifted from implementation to specification and evaluation.

The difference shows up in specific scenarios. A marketing ops manager needed an internal tool to sync campaign data between HubSpot and their reporting dashboard. In the coding era, that's a ticket in Jira, a sprint planning conversation, two weeks of developer time. In the building era, she described the sync logic to Claude Code, iterated on the output for an afternoon, and had a working tool by end of day. That was possible because the constraint had moved from implementation to specification.

A designer prototyping a dashboard in Figma used to hand off static mockups to a frontend team. Now the prototype becomes the product. v0 takes the design, generates the React components, and the designer iterates on the actual code output. The handoff didn't get faster. The handoff disappeared.

Who builds now

The audience expanded, but not in the way the "everyone is a developer" crowd claims. A product manager shipping an internal tool isn't a developer. A designer generating React components from a prototype isn't a frontend engineer. They're building software, but they're doing it through a different interface than a code editor and a terminal.

The people making product decisions can now execute on them directly. That's the actual shift. A domain expert who understands the problem space deeply can build the first version of a solution without translating their knowledge through a development team. The translation layer got thinner.

Developers didn't become less important. They became faster. A senior engineer using Cursor with background agents can move through a codebase at a pace that wasn't possible two years ago. The tedious parts shrink. The judgment parts stay. A staff engineer reviewing AI-generated code still needs to spot the subtle concurrency bug, the missing index, the abstraction that will break when requirements change. That work didn't go anywhere.

What this means for developer content

If you're writing tutorials that start with "First, install the SDK and initialize a client," you're writing for a shrinking audience. Not shrinking to zero, but narrowing. A growing segment of builders thinks in prompts, not imports. They describe what they want, evaluate the output, and iterate. The SDK installation happens inside that loop, handled by the AI, often without the builder knowing which package manager ran.

This doesn't mean content gets dumber. It means the frame shifts. Instead of "How to implement authentication with NextAuth," the useful article becomes "How to think about authentication for a SaaS app." What are the tradeoffs between session-based and token-based auth? When does OAuth make sense versus magic links? What are the actual security implications of each choice?
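To make the session-versus-token question concrete, here is a minimal sketch of the core tradeoff, not a recommendation for any library. It assumes an in-memory dict as the session store and a hypothetical HMAC-signed string standing in for a real token format such as a JWT. All function names are illustrative. A session is an opaque ID the server must look up, so it can be revoked instantly. A signed token is verified without a lookup, but it stays valid until it expires unless you add server-side state back in.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

SECRET_KEY = secrets.token_bytes(32)  # hypothetical signing key for the sketch

# Session-based: the cookie holds an opaque ID; the server holds the state.
SESSIONS: dict[str, str] = {}

def create_session(user_id: str) -> str:
    session_id = secrets.token_urlsafe(16)
    SESSIONS[session_id] = user_id
    return session_id

def check_session(session_id: str) -> Optional[str]:
    # Every request pays for a store lookup...
    return SESSIONS.get(session_id)

def revoke_session(session_id: str) -> None:
    # ...but logout or a ban takes effect immediately.
    SESSIONS.pop(session_id, None)

# Token-based: the token carries its own claims plus a signature.
def issue_token(user_id: str, ttl_seconds: int = 900) -> str:
    expires = int(time.time()) + ttl_seconds
    payload = f"{user_id}:{expires}"
    sig = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}:{sig}"

def check_token(token: str) -> Optional[str]:
    # No lookup needed: recompute the signature and check expiry.
    try:
        user_id, expires, sig = token.rsplit(":", 2)
    except ValueError:
        return None  # malformed token
    payload = f"{user_id}:{expires}"
    expected = hmac.new(SECRET_KEY, payload.encode(), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(sig, expected):
        return None  # tampered or signed with a different key
    if int(expires) < time.time():
        return None  # expired
    # Note: there is no revoke_token(). Revoking early means keeping a
    # server-side denylist, which reintroduces the state tokens avoided.
    return user_id
```

Neither side is "correct": the session path trades a lookup per request for instant revocation, and the token path trades revocation for statelessness. That tradeoff is the decision an article is actually useful for.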

The content that holds value is the content that helps someone make decisions, not the content that walks them through keystrokes. Implementation guides aren't dead, but they're commoditized. The AI can generate a NextAuth setup. What it can't do is tell you whether NextAuth is the right choice for your specific situation.

What didn't change

Architecture decisions still require someone who understands distributed systems and scaling characteristics. AI can generate a microservices setup, but it doesn't know whether your team of four should be running microservices or a monolith. It doesn't know your deployment constraints, your on-call rotation, or the operational complexity your team can absorb.

Judgment still matters. AI generates plausible code quickly. It also generates plausible bugs quickly. Someone needs to review the output, understand the failure modes, and catch the cases where the AI optimized for the wrong thing. A function that passes all tests but handles errors by swallowing them silently is worse than a function that doesn't compile, because at least the compiler error is honest.
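Here is a minimal illustration of that failure mode. The quantity-parsing scenario and the function names are hypothetical. Both versions pass the same happy-path tests, but only one tells you when the input is bad.

```python
def parse_quantity_swallowing(raw: str) -> int:
    """Returns 0 on any failure, hiding the problem from the caller."""
    try:
        return int(raw)
    except Exception:
        return 0  # silently swallowed: "three" and "0" now look identical


def parse_quantity_honest(raw: str) -> int:
    """Surfaces bad input so the caller has to decide what to do."""
    try:
        return int(raw)
    except ValueError as exc:
        raise ValueError(f"invalid quantity: {raw!r}") from exc


def run_happy_path_checks(parse) -> None:
    # A suite that only covers valid input passes for both versions.
    assert parse("3") == 3
    assert parse("0") == 0


run_happy_path_checks(parse_quantity_swallowing)
run_happy_path_checks(parse_quantity_honest)

print(parse_quantity_swallowing("three"))  # prints 0, no error raised
```

The two functions differ only on malformed input. A reviewer catches the difference by asking for that test case, not by reading the passing ones.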

Senior developers aren't less valuable. They're more leveraged. The gap between a senior engineer with AI tools and a junior engineer with AI tools is wider than the gap was without AI. The senior knows what to ask for and knows when the output is wrong. The ceiling didn't move. The skills that make senior engineers valuable are the same ones AI can't replicate.

The career path question

Here's the part nobody has a good answer for: what does a junior developer career path look like when the entry-level work is automated?

Junior roles traditionally existed as training grounds. You wrote CRUD endpoints, fixed CSS bugs, added form validation. Those tasks built intuition for how systems work, how code breaks, and how to debug when something doesn't behave. That intuition feeds the judgment that makes senior engineers valuable. The entry-level work wasn't just work. It was education.

If AI handles the CRUD endpoints and the form validation and the CSS bugs, the question isn't whether juniors are needed. It's how they develop the judgment that AI can't replace. The obvious answer is "they'll learn by reviewing AI output instead of writing code from scratch." Maybe. But reviewing code you don't fully understand is a different skill than writing it, and it's not clear it builds the same depth of understanding.

This is an open question, and I don't think anyone has an honest answer yet. The tools moved faster than the career structures adapted. Companies are still hiring for roles defined by the coding era while the work increasingly belongs to the building era. That mismatch will resolve, but how it resolves will shape the next generation of engineers.